In the previous articles, we described the cloud architecture that will be built for this Ironman competition. Starting today, we introduce the IaaS layer it uses: OpenStack. This article begins with an overview, and subsequent articles will cover individual OpenStack components in detail. Reference: This series draws extensively on OpenStack's own documentation for references and images. Readers who want a deeper understanding of OpenStack concepts can read the official documentation directly. What is OpenStack? We can look at OpenStack from three perspectives: Software, Community, and Group. Software: OpenStack is essentially a software suite for providing private and public cloud services, covering use cases such as general enterprises, telecommunications providers, and high-performance computing. From a software perspective, OpenStack consists of multiple microservices, and users can combine these services to fit their own application scenarios. The services are exposed primarily through REST APIs, and Software Development Kits (SDKs) are available for several programming languages. The software can be installed from the official tarballs, and pre-packaged versions are available through the package managers of major Linux distributions. OpenStack Software Map: Community: Beyond the software, OpenStack is also a massive community whose goal is: [...]
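
To make the "services are exposed through REST APIs" point concrete, here is a minimal sketch of what a password authentication request to Keystone's v3 API looks like. The request is only constructed, never sent, so it runs without a cloud. The endpoint `http://controller:5000`, the user `demo`, the password, and the project name are all hypothetical placeholders, and `build_token_request` is a helper name invented for this illustration.

```python
import json
import urllib.request


def build_token_request(auth_url, username, password, project,
                        domain="Default"):
    """Build (but do not send) a Keystone v3 password-auth request."""
    body = {
        "auth": {
            "identity": {
                "methods": ["password"],
                "password": {
                    "user": {
                        "name": username,
                        "domain": {"name": domain},
                        "password": password,
                    }
                },
            },
            # Scope the token to one project so other services accept it.
            "scope": {
                "project": {"name": project, "domain": {"name": domain}}
            },
        }
    }
    return urllib.request.Request(
        url=auth_url.rstrip("/") + "/v3/auth/tokens",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical endpoint and credentials, for illustration only.
req = build_token_request("http://controller:5000", "demo", "secret", "demo")
print(req.get_method(), req.full_url)
```

Against a real deployment, Keystone returns the issued token in the `X-Subject-Token` response header. In practice most users would let an SDK or the command-line client handle this exchange.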

From Bare Metal to Cloud: OpenStack Nova Introduction 2

In the previous article, we introduced Nova's features and usage. This article will continue by introducing Nova's architecture. OpenStack Nova System Architecture: Nova consists of multiple server processes, each performing different functions. The user-facing interface is a REST API, while internal Nova components communicate via RPC. The API server handles REST requests, which usually involve database reads/writes or sending RPC messages to other Nova services, and generates corresponding REST responses. RPC message passing is accomplished through oslo.messaging, an RPC message abstraction layer shared by OpenStack components, allowing them to ignore the underlying RPC implementation. Most major Nova components can run on multiple servers and have a manager listening for RPC messages to achieve high availability and load balancing. A major exception is nova-compute, which runs as a single process on each hypervisor it manages (using VMware or [...]
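
The request flow described above (REST call in, database write, RPC message out, REST response back) can be mimicked with a toy model. This is not oslo.messaging and not Nova code: `ToyBus`, `ComputeManager`, `api_create_server`, and the `compute.<host>` topic naming are all invented for illustration, and the sketch skips the conductor and scheduler that sit between the API and the compute service in a real deployment.

```python
import queue


class ToyBus:
    """Stand-in for the message bus: one FIFO queue per topic."""

    def __init__(self):
        self.topics = {}

    def cast(self, topic, method, **kwargs):
        # Fire-and-forget: enqueue the call and return immediately.
        self.topics.setdefault(topic, queue.Queue()).put((method, kwargs))

    def drain(self, topic, manager):
        # Deliver every pending message on the topic to the manager.
        pending = self.topics.get(topic)
        results = []
        while pending is not None and not pending.empty():
            method, kwargs = pending.get()
            results.append(getattr(manager, method)(**kwargs))
        return results


class ComputeManager:
    """Toy manager that 'listens' for RPC messages for one host."""

    def __init__(self, host):
        self.host = host
        self.instances = []

    def build_instance(self, name):
        self.instances.append(name)
        return f"{name} built on {self.host}"


def api_create_server(bus, db, name, host):
    """Handle a 'create server' call: write the DB, cast an RPC, respond."""
    db[name] = "BUILDING"
    bus.cast(f"compute.{host}", "build_instance", name=name)
    return {"status": 202, "server": {"name": name}}


bus, db = ToyBus(), {}
print(api_create_server(bus, db, "vm1", "node1"))
print(bus.drain("compute.node1", ComputeManager("node1")))
```

The API function returns before any instance exists, which is why the response is an "accepted" status rather than a finished result. The work happens later, when the manager consumes the message.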

From Bare Metal to Cloud: OpenStack Nova Introduction 1

Yesterday's article gave readers a general introduction to the OpenStack architecture. Today, we begin a deep dive into each component, starting with OpenStack's core functionality: the Compute service, Nova, which is the component responsible for launching virtual machines. What is Nova? Nova is the OpenStack project that provisions compute instances. It currently supports virtual machines and bare metal hosts (the latter via Ironic). Nova runs as a set of daemons on top of existing Linux servers to provide this service. Later sections will introduce the tasks each Nova daemon is responsible for. Nova's basic functionality currently requires the following OpenStack services: Keystone, Glance, Neutron, and Placement. Placement was not introduced in the previous article, so here is a quick overview. Placement is a service that was split out of Nova; it tracks the available inventory and usage of different classes of resources, such as CPU and RAM. For users: You can create and manage compute resources through tools or APIs provided by Nova. Using Nova [...]
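
As a rough illustration of what "tracking resources of different classes and their usage" means, the sketch below models one resource provider with per-class inventories. It is a toy, not the Placement API: the `Inventory` and `ResourceProvider` classes and the `claim` method are invented here. The capacity formula `(total - reserved) * allocation_ratio` and the class names `VCPU` and `MEMORY_MB` follow Placement's conventions; the numbers are made up.

```python
from dataclasses import dataclass


@dataclass
class Inventory:
    total: int
    reserved: int = 0
    allocation_ratio: float = 1.0
    used: int = 0

    @property
    def capacity(self):
        # Overcommit is expressed through allocation_ratio.
        return int((self.total - self.reserved) * self.allocation_ratio)

    def can_fit(self, amount):
        return self.used + amount <= self.capacity


class ResourceProvider:
    """Toy provider, e.g. one compute node, with per-class inventories."""

    def __init__(self, name, inventories):
        self.name = name
        self.inventories = inventories

    def claim(self, request):
        # All-or-nothing: check every class first, then record the usage.
        for resource_class, amount in request.items():
            inv = self.inventories.get(resource_class)
            if inv is None or not inv.can_fit(amount):
                return False
        for resource_class, amount in request.items():
            self.inventories[resource_class].used += amount
        return True


node = ResourceProvider("node1", {
    "VCPU": Inventory(total=8, allocation_ratio=2.0),
    "MEMORY_MB": Inventory(total=16384, reserved=512),
})
flavor = {"VCPU": 4, "MEMORY_MB": 8192}
print(node.claim(flavor), node.claim(flavor))
```

The second claim fails because memory, not CPU, runs out first: 16 virtual CPUs are available after overcommit, but only 15872 MB of RAM.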

From Bare Metal to Cloud: High-Level Architecture Introduction

In the previous articles, we introduced the NIST definition of cloud computing. Starting today, we will get down to business and teach you step-by-step how to build a cloud from bare metal. First, I will briefly introduce the architecture I plan to build. IaaS Layer: The IaaS Layer for this cloud will use OpenStack. The reasons for this choice are as follows: It is currently the most common private cloud solution; it is mature, has many users, and scales from small to large. Open Source, Open Source, Open Source: Open source is the most important, so I listed it three times. Because it is open source, the software is easy to obtain and free to use (Apache License). Readers can easily follow my steps for implementation. PaaS Layer: The PaaS Layer will use Kubernetes. The reasons for this choice are as follows: It is currently the most common container orchestration platform. All major public clouds provide managed services. Its basic functions are mature, it has many users, and it scales from small to large. Open Source, Open Source, Open Source: I believe many readers already have experience with Kubernetes. This series will also teach how to set up Kubernetes on OpenStack and use [...]

From Bare Metal to Cloud: Cloud Definition 3

Yesterday we introduced the three service models of cloud computing. Today, we continue with the cloud definition to introduce the four deployment models of cloud services. Deployment Models: The cloud deployment models defined by NIST mainly include the following: Private Cloud, Public Cloud, Hybrid Cloud, Community Cloud. Some people also refer to Hybrid Cloud as Multi-Cloud. The introduction here also cites translations from the National Chung Hsing University Senior Learning Network. Private Cloud: The cloud infrastructure is operated solely for an organization. It may be managed by the organization or a third party and may exist on-premise or off-premise. Private clouds possess the elasticity of public cloud environments while being subject to specific network and user restrictions. Since data and programs are managed within the organization, they are less affected by network bandwidth, security concerns, and regulatory restrictions, allowing cloud providers and users to better control the infrastructure and improve security and flexibility. Simply put, a cloud built by a company for itself is usually a private cloud, and the users are typically other development departments within the company. Community Cloud: The cloud infrastructure is shared by several organizations and supports a specific community that has shared concerns (e.g., mission, security requirements, policy, and compliance considerations). It may be managed by the organizations or a third party and may exist on-premise or off-premise. This model is currently less common. Public Cloud: The cloud infrastructure is made available to the general public or a large industry group and is owned by an organization selling cloud services. In addition to elasticity, it offers cost-effectiveness. This allows public cloud users to save on the costs and technical requirements of managing physical machines, data centers, power, and cooling facilities. 
Furthermore, "public" does not mean that user data is viewable by anyone; cloud providers usually implement access control mechanisms for users. Public cloud is also the most widely recognized form of cloud computing. Currently, the three major international public cloud providers are AWS, Google Cloud Platform (GCP), and Microsoft Azure, alongside smaller-scale providers such as Oracle Cloud, Tencent, Alibaba, and IBM Cloud. Hybrid Cloud: The cloud infrastructure is a composition of two or more clouds (private, community, or public) that remain unique entities but are bound together by standardized or proprietary technology that enables data and application portability. In this model, users typically outsource non-critical business information to a public cloud while keeping control over sensitive internal services and data. Nowadays, to avoid putting all their eggs in one basket, some vendors plan to use multiple public clouds simultaneously so that an issue with a single provider does not interrupt their service. Setting up a hybrid cloud usually requires engineers to understand each cloud platform involved, and it makes the proprietary services of individual public clouds harder to use, because applications need to run normally across different clouds. These are the four cloud deployment models defined by NIST. Summary: Cloud deployment models should be as easy to understand as the essential characteristics. I believe many readers have experience using public clouds. This Ironman competition will focus on the concept of private clouds, leading everyone step-by-step from a physical server [...]

From Bare Metal to Cloud: Cloud Definition 2

Yesterday we introduced the five essential characteristics of cloud computing. Today, we continue with the cloud definition to introduce the three service models of cloud services. Service Models: Cloud service models are mainly divided into three types: Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS). We will refer to them as IaaS, PaaS, and SaaS in the following introduction. I will cite translations from the National Chung Hsing University Senior Learning Network here. IaaS: "Infrastructure as a Service" is a collection of virtualized hardware resources and related management functions. Through virtualization technology, resources such as computing, storage, and networking are abstracted to achieve internal process automation and resource management optimization, thereby providing dynamic and flexible infrastructure services to the outside world. Consumers at this layer use "basic computing resources" such as processing power, storage space, network components, or middleware. They can also control operating systems, storage, deployed applications, and firewalls, load balancers, etc., but do not control the underlying cloud infrastructure; instead, they directly enjoy the convenient services brought by IaaS. Taking major cloud service providers as examples, the following services fall under IaaS: AWS: EC2, VPC; GCP: GCE; Azure: VM, Block Storage. PaaS: "Platform as a Service" provides an environment for developing, running, managing, and monitoring cloud applications. It can be described as optimized "cloud middleware." A good platform layer design can meet cloud requirements for scalability, availability, and security. Consumers at this layer can use programming tools provided by the platform provider to build their own applications on the cloud architecture. 
Although they can control the environment for running applications (and have some control over the host), they do not control the operating system, hardware, or the underlying network infrastructure. The Kubernetes that everyone often hears about is generally categorized as PaaS (Container as a Service), as are various managed services like databases. Taking major cloud service providers as examples, the following services fall under PaaS: AWS: [...]

From Bare Metal to Cloud: Cloud Definition 1

Preface: Hello everyone, I'm Gene. If you've participated in the Cloud Native Taiwan User Group, you've probably heard of me. This is my first time participating in the Ironman competition; community members encouraged me to take the plunge. My topic is "From Bare Metal to Cloud: Building a Cloud in 30 Days." The series starts with cloud concepts, then moves on to OpenStack architecture and deployment methods, and finally to Kubernetes architecture and how to deploy Kubernetes on OpenStack. Overall, it will guide readers step-by-step from a simple Linux machine to their own private cloud. Cloud Definition: Cloud computing is usually defined according to "The NIST Definition of Cloud Computing." NIST defines cloud computing from three perspectives: Essential Characteristics, Service Models, and Deployment Models. Essential Characteristics: Cloud computing has five essential characteristics, which are also its core concepts. They include: On-demand Self-service: A consumer can unilaterally provision computing capabilities, such as server time and network storage, automatically and as needed, without requiring human interaction with each service provider. Broad Network Access: [...]

MicroStack — Build an OpenStack Environment in 30 Minutes

Many people still believe that OpenStack is difficult to install, but that is no longer the case. Since projects such as Kolla-Ansible, TripleO, and OpenStack-Ansible emerged, installing and configuring OpenStack has become much easier. This time, I want to introduce a project that simplifies OpenStack installation even further: MicroStack. What is MicroStack? An OpenStack Environment in 2 commands. MicroStack is a project that lets you bring up a basic OpenStack environment with just two commands, which significantly lowers the barrier to entry for using OpenStack. It has the following features: Fast Installation: In my own test on a machine with 4 cores, 16GB RAM, and a 100GB SSD, a complete MicroStack installation took about 30 minutes. Upstream: MicroStack installs unmodified upstream OpenStack code, so there's no need to worry about system instability caused by vendor-added features. Complete: The environment built by MicroStack includes most major OpenStack components, including: Keystone, Nova, Glance, Neutron, Cinder [...]

How OpenStack nova-scheduler works

Recently at work, I've been looking at a lot of OpenStack Nova code for various reasons, so I plan to write some articles to record how Nova works. The first article will start with nova-scheduler. It will lead readers from the code level to understand how the conductor calls the scheduler and how the scheduler makes decisions. This article is based on the latest stable version at the time of writing, Victoria. Introduction to nova-scheduler: nova-scheduler is designed as a plugin-based scheduler, allowing users to write their own scheduler drivers according to their needs. In Nova, the filter scheduler provided in the Nova codebase is enabled by default. The principle of the Filter scheduler is very simple: it basically passes all hosts through a series of "filters." If a host does not meet the filter criteria, it is eliminated; if it does, it passes through and enters the final selection pool. These hosts that pass the filters will then [...]
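
The filtering idea can be sketched in a few lines. This is a toy model rather than Nova's code: the host dictionaries, `ram_filter`, `cpu_filter`, and `schedule` are invented for illustration. Ranking the surviving hosts by free RAM is an assumption made here so the example has a final ordering; it stands in for whatever selection step follows filtering.

```python
def ram_filter(host, spec):
    """Pass hosts with enough free memory for the request."""
    return host["free_ram_mb"] >= spec["ram_mb"]


def cpu_filter(host, spec):
    """Pass hosts with enough free virtual CPUs for the request."""
    return host["free_vcpus"] >= spec["vcpus"]


def schedule(hosts, spec, filters, weigher):
    # A host survives only if every filter accepts it.
    candidates = [h for h in hosts if all(f(h, spec) for f in filters)]
    # Rank survivors; the highest weight comes first.
    return sorted(candidates, key=weigher, reverse=True)


hosts = [
    {"name": "node-a", "free_ram_mb": 2048, "free_vcpus": 4},
    {"name": "node-b", "free_ram_mb": 8192, "free_vcpus": 1},
    {"name": "node-c", "free_ram_mb": 4096, "free_vcpus": 8},
]
request_spec = {"ram_mb": 2048, "vcpus": 2}

ranked = schedule(hosts, request_spec, [ram_filter, cpu_filter],
                  weigher=lambda h: h["free_ram_mb"])
print([h["name"] for h in ranked])
```

Here `node-b` is eliminated by the CPU filter even though it has the most free RAM, which shows why filters run before any ranking.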

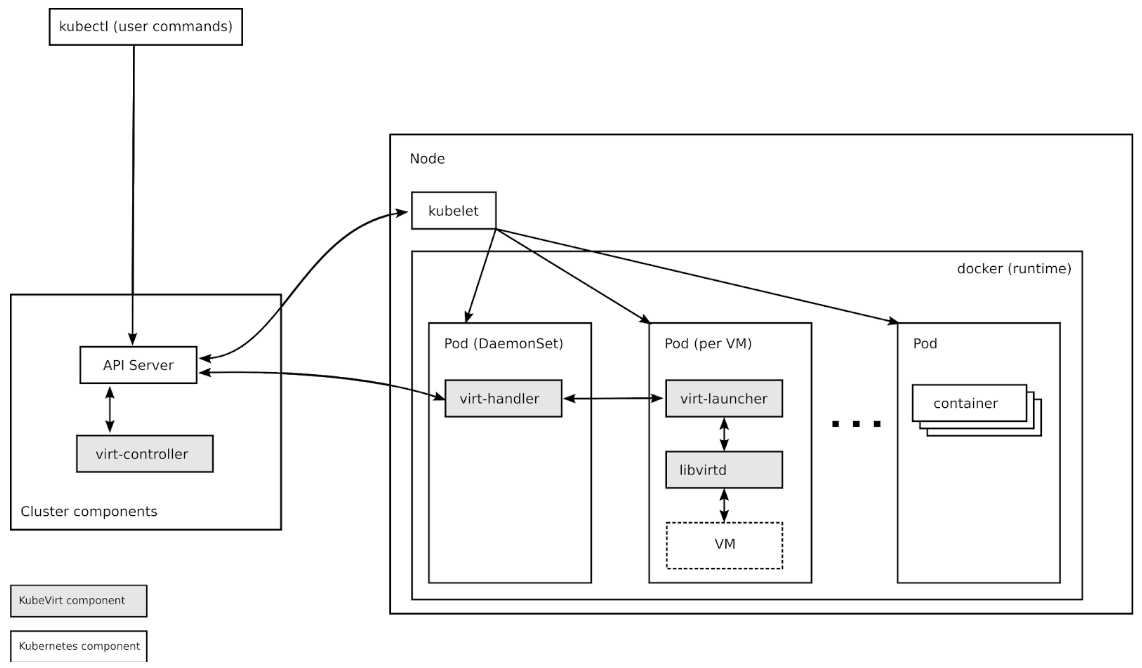

Introduction to KubeVirt Architecture

A few days ago, I gave a long-awaited talk on KubeVirt 101 at the Cloud Native Taiwan User Group. The more I play with this project, the more interesting I find it. Here, I plan to condense the content of the KubeVirt 101 talk, focusing on the architecture. I encourage everyone to try this operator for running VM workloads on Kubernetes. Basic Introduction: Official text: "KubeVirt is a virtual machine management add-on for Kubernetes. The aim is to provide a common ground for virtualization solutions on top of Kubernetes." Simply put, KubeVirt is a Kubernetes operator that allows users to run virtual machine workloads on Kubernetes. This lets users run applications that haven't been containerized yet or that require a specific kernel. The biggest advantage is that, in terms of operations, all concepts are the same as standard Kubernetes containers [...]